process_file(self, filepath, only_if_updated=True, safe_mode=True)
Given a path to a python module or zip file, this method imports the module and looks for dag objects within it.

kill_zombies(self, zombies, session=None)
Fails given zombie tasks, which are tasks that haven't had a heartbeat for too long, in the current DagBag.
Parameters:
zombies (_processing.SimpleTaskInstance) – zombie task instances to kill.
session – DB session.

bag_dag(self, dag, parent_dag, root_dag)
Adds the DAG into the bag, recurses into sub dags. Throws AirflowDagCycleException if a cycle is detected in this dag or its subdags.

collect_dags(self, dag_folder=None, only_if_updated=True, include_examples=conf.getboolean('core', 'LOAD_EXAMPLES'), safe_mode=conf.getboolean('core', 'DAG_DISCOVERY_SAFE_MODE'))
Given a file path or a folder, this method looks for python modules, imports them and adds them to the dagbag collection. If a .airflowignore file is found while processing the directory, it will behave much like a .gitignore, ignoring files that match any of the regex patterns specified in the file. The patterns are treated as un-anchored regexes, not shell-like glob patterns.

collect_dags_from_db(self)
Collects DAGs from database.
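The difference between un-anchored regexes and shell-like globs in the .airflowignore note can be illustrated with the standard library alone. This is a minimal sketch, not Airflow's own code: the helper `is_ignored`, the example patterns, and the file paths are all hypothetical and chosen only for illustration.

```python
import fnmatch
import re


def is_ignored(path, patterns):
    """Hypothetical helper: True if any regex matches anywhere in the path."""
    # re.search is un-anchored: the pattern may match at any position.
    return any(re.search(pattern, path) for pattern in patterns)


# Example patterns, as they might appear one per line in a .airflowignore file.
patterns = ["project_a", r"tenant_[\d]"]

print(is_ignored("dags/project_a_dag_1.py", patterns))  # matched by "project_a"
print(is_ignored("dags/tenant_1.py", patterns))         # matched by "tenant_[\d]"
print(is_ignored("dags/project_b.py", patterns))        # no pattern matches

# A shell-like glob must match the whole string, so the same text
# used as a glob does not match the path.
print(fnmatch.fnmatch("dags/project_a_dag_1.py", "project_a"))
```

A practical consequence is that a short pattern can ignore more files than intended, because it matches anywhere in the path rather than only a whole file name.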

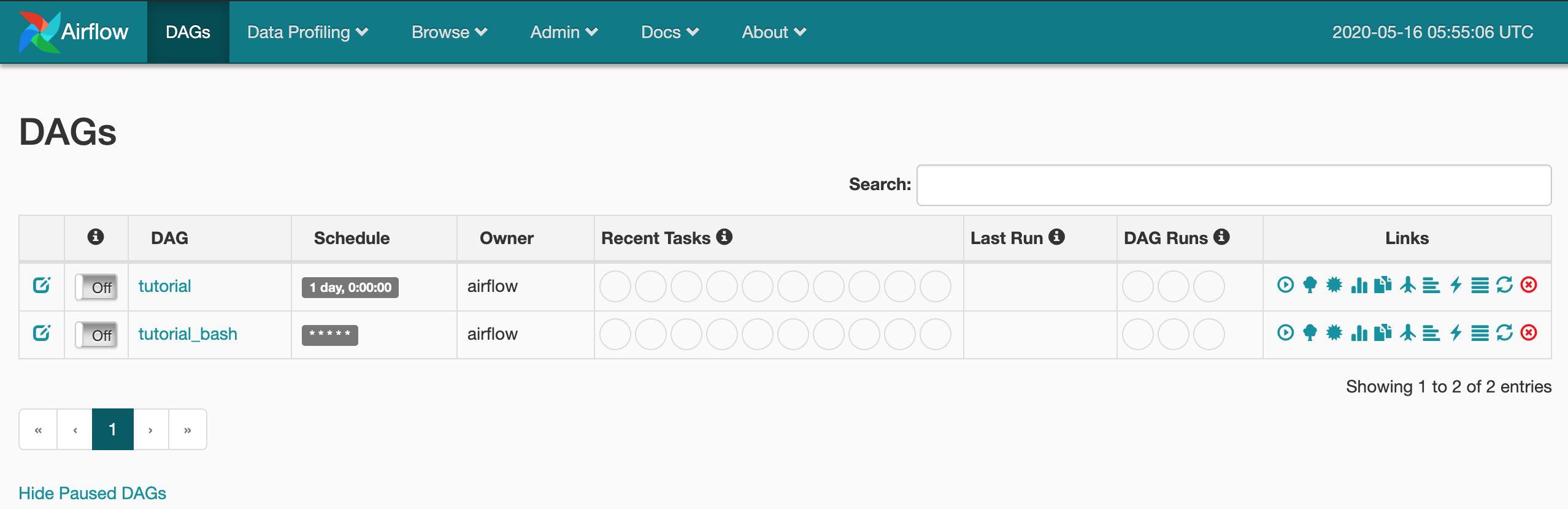

This issue means that the Web Server is failing to fill in the DAG bag on its side; this problem is most likely not with your DAG specifically. Similar issues are reported in this post as well here. My suggestion right now would be to try restarting the web server (via the installation of some dummy package).

store_serialized_dags (bool) – Read DAGs from DB if store_serialized_dags is True. If False, DAGs are read from python files.

Skipped files are logged only once per DagBag, to prevent overloading the user with logging.

CYCLE_NEW = 0
CYCLE_IN_PROGRESS = 1
CYCLE_DONE = 2
DAGBAG_IMPORT_TIMEOUT
UNIT_TEST_MODE
SCHEDULER_ZOMBIE_TASK_THRESHOLD
dag_ids

size(self)
Returns the amount of dags contained in this dagbag.

get_dag(self, dag_id, from_file_only=False)
Gets the DAG out of the dictionary, and refreshes it if expired.
Parameters:
from_file_only (bool) – returns a DAG loaded from file.

Module Contents

class DagBag(dag_folder=None, executor=None, include_examples=conf.getboolean('core', 'LOAD_EXAMPLES'), safe_mode=conf.getboolean('core', 'DAG_DISCOVERY_SAFE_MODE'), store_serialized_dags=False)
Bases: _dag.BaseDagBag, _mixin.LoggingMixin

A dagbag is a collection of dags, parsed out of a folder tree, and has high level configuration settings, like what database to use as a backend and what executor to use to fire off tasks. This makes it easier to run distinct environments for say production and development, tests, or for different teams or security profiles. What would have been system level settings are now dagbag level so that one system can run multiple, independent settings sets.

Parameters:
dag_folder (unicode) – the folder to scan to find DAGs
executor – the executor to use when executing task instances
include_examples (bool) – whether to include the examples that ship with airflow or not
has_logged – an instance boolean that gets flipped from False to True after a file has been skipped.

The Datadog Agent collects many metrics from Airflow, including those for DAGs (Directed Acyclic Graphs): number of DAG processes, DAG bag size, etc.

In my Python 3.7 environment I have installed Airflow 2, speedtest-cli and a few other things using pip, and I keep seeing this error pop up in the Airflow UI:

Broken DAG: Traceback (most recent call last):
File "/usr/local/lib/python3.7/site-packages/speedtest.py", line 156, in <module>
ModuleNotFoundError: No module named '__builtin__'
During handling of the above exception, another exception occurred:
File "/usr/local/lib/python3.7/site-packages/speedtest.py", line 179, in <module>
_py3_utf8_stdout = _Py3Utf8Output(sys.stdout)
File "/usr/local/lib/python3.7/site-packages/speedtest.py", line 166, in __init__
AttributeError: 'StreamLogWriter' object has no attribute 'fileno'

For sanity checks I ran the following and saw no problems:

~# python airflow/dags/my_dag.py
/usr/local/lib/python3.7/site-packages/airflow/utils/decorators.py:94 DeprecationWarning: provide_context is deprecated as of 2.0 and is no longer required

I even went to the exact line of speedtest.py mentioned in the Broken DAG error, line 156. It seems fine and runs fine when I put it in the python interpreter.

So, how do I diagnose this? Seems like a package import problem of some sort.

Edit: If it helps, here is my directory and import structure for my_dag.py:

- airflow
push_targets.py (speedtest is imported here)

The import sequence of tasks in the dag file is as follows:

from datetime import timedelta
# The DAG object; we'll need this to instantiate a DAG
from import PythonOperator
from import DummyOperator
from tasks.get_configs import get_configs
from tasks.get_targets import get_targets
from tasks.
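One reading of the traceback is that, when Airflow parses the DAG file, `sys.stdout` is a `StreamLogWriter` object without a `fileno()` method, whereas running the file directly with `python` leaves a real file-backed stdout in place. The sketch below reproduces only that failure mode. It assumes a hypothetical stand-in class `FakeLogWriter` and hypothetical helpers `needs_fileno` and `try_wrap`; none of these are Airflow or speedtest code.

```python
import tempfile


class FakeLogWriter:
    """Hypothetical stand-in for a logging stream: write/flush only, no fileno()."""

    def __init__(self):
        self.lines = []

    def write(self, message):
        self.lines.append(message)

    def flush(self):
        pass


def needs_fileno(stream):
    """Mimics a wrapper that requires a real file descriptor from the stream."""
    return stream.fileno()


def try_wrap(stream):
    """Return "ok" if the stream exposes fileno(), else describe the failure."""
    try:
        needs_fileno(stream)
        return "ok"
    except AttributeError as exc:
        return f"AttributeError: {exc}"


# A log-writer style object fails in the same way as in the traceback.
print(try_wrap(FakeLogWriter()))

# A real file object has a descriptor, so the same call succeeds.
with tempfile.TemporaryFile() as real_file:
    print(try_wrap(real_file))
```

If this reading is right, it would explain why the same import works in the interpreter but fails inside Airflow: the difference lies in what `sys.stdout` is at import time, not in the package installation.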
